This research is funded by a grant from the Deutsche Forschungsgemeinschaft (DFG, Grant Ref.: Schw 511/25-1, within the DFG SPP 2392 Visual Communication).

C.I.v.E. was supported by the German National Academic Foundation (‘Studienstiftung des Deutschen Volkes’) from April 2019 until September 2022.

Description

This project aims to deepen our understanding of audiovisual perception in both hearing individuals and cochlear implant (CI) users, with a particular focus on the neurocognitive mechanisms underlying emotional communication. CIs are sensory prostheses designed to provide individuals who have sensorineural deafness with a modified sense of sound. Speech perception has been the main goal of CI technology and has generally been treated as the benchmark for successful intervention, which has led to greatly improved speech perception with CIs over the last few decades. Emotion perception in CI users, by contrast, remains a largely underexplored research area. The relevance of studying it thoroughly is underscored by consistent positive correlations between emotion perception and quality of life, whereas previous research has demonstrated only a relatively weak relationship between speech perception and perceived quality of life in CI users. Moreover, although voices are often produced by speakers who are visible during communication, and emotions are multimodal in nature, audiovisual integration of vocal and facial emotions in CI users has largely been overlooked in previous research.

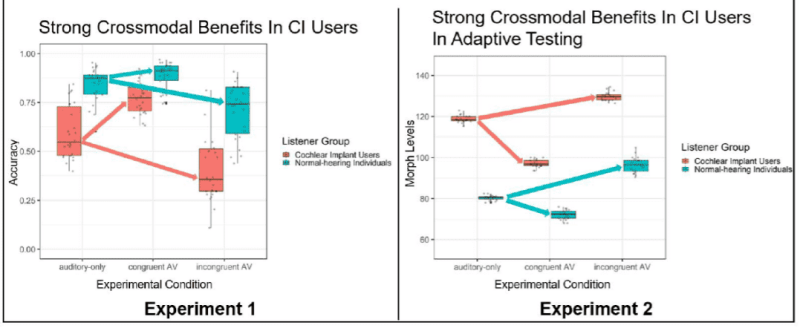

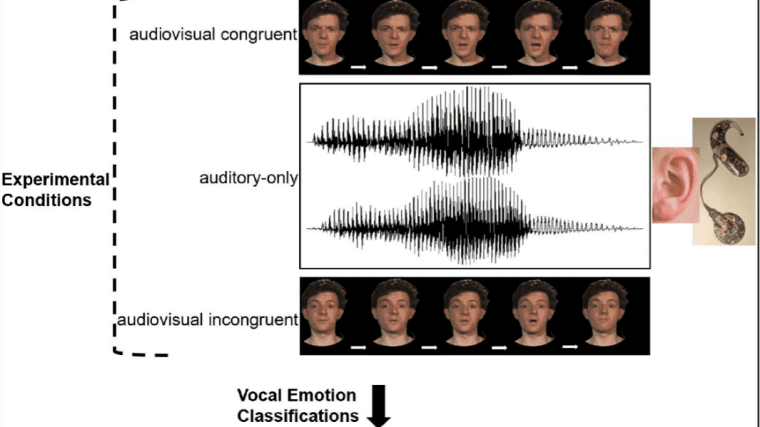

Using advanced methods such as parameter-specific voice morphing and synchronized audiovisual stimuli, we investigate how CI users integrate vocal and facial information to perceive emotions. Moreover, we explore how crossmodal plasticity in the brain, driven by visual inputs, might enhance CI users’ multimodal perception. The project also examines the temporal dynamics of audiovisual integration in emotion perception, hypothesizing that visual information may offer a processing advantage, especially for CI users. Finally, we develop and evaluate perceptual training interventions aimed at improving CI users’ vocal emotion perception abilities and, ultimately, their quality of life.

For further information, please visit: https://vicom.info/projects/audiovisual-perception-of-emotion-and-speech-in-hearing-individuals-and-cochlear-implant-users/

Resources

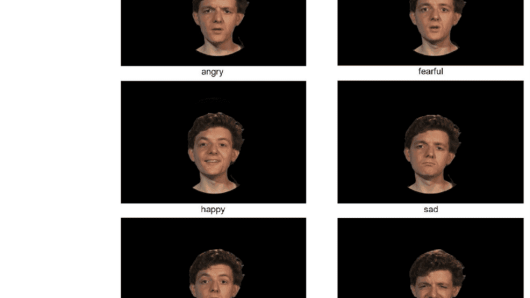

In the context of this project, we developed JAVMEPS, an audiovisual database of emotional voice and dynamic face stimuli in which the voices vary in emotional intensity (von Eiff et al., 2024, Behavior Research Methods).

Publications

- von Eiff, C. I., Kauk, J., & Schweinberger, S. R. (2024). The Jena Audiovisual Stimuli of Morphed Emotional Pseudospeech (JAVMEPS): A database for emotional auditory-only, visual-only, and congruent and incongruent audiovisual voice and dynamic face stimuli with varying voice intensities. Behavior Research Methods, 1-13.

- von Eiff, C. I., Frühholz, S., Korth, D., Guntinas-Lichius, O., & Schweinberger, S. R. (2022). Crossmodal benefits to vocal emotion perception in cochlear implant users. iScience, 25(12).

- Schweinberger, S. R., & von Eiff, C. I. (2022). Enhancing socio-emotional communication and quality of life in young cochlear implant recipients: Perspectives from parameter-specific morphing and caricaturing. Frontiers in Neuroscience, 16, 956917.

- von Eiff, C. I., Skuk, V. G., Zäske, R., Nussbaum, C., Frühholz, S., Feuer, U., Guntinas-Lichius, O., & Schweinberger, S. R. (2022). Parameter-specific morphing reveals contributions of timbre to the perception of vocal emotions in cochlear implant users. Ear and Hearing, 43(4), 1178–1188. https://doi.org/10.1097/AUD.0000000000001181

- Gregori, A., Amici, F., Brilmayer, I., Ćwiek, A., Fritzsche, L., Fuchs, S., ... & von Eiff, C. I. (2023, July). A roadmap for technological innovation in multimodal communication research. In International Conference on Human-Computer Interaction (pp. 402-438). Cham: Springer Nature Switzerland.

- Henlein, A., Bauer, A., Bhattacharjee, R., Ćwiek, A., Gregori, A., Kügler, F., ... & von Eiff, C. I. (2024, June). An outlook for AI innovation in multimodal communication research. In International Conference on Human-Computer Interaction (pp. 182-234). Cham: Springer Nature Switzerland.

- Christian Dobel

  Department of Otorhinolaryngology, Jena University Hospital, Jena, Friedrich Schiller University Jena, Germany

  DFG SPP 2392 Visual Communication (ViCom), Frankfurt am Main, Germany

  Contact via: Christian.Dobel@med.uni-jena.de

- Volker Gast

  Department of English and American Studies, Friedrich Schiller University Jena, Germany

  DFG SPP 2392 Visual Communication (ViCom), Frankfurt am Main, Germany

  Contact via: volker.gast@uni-jena.de

- Stefan R. Schweinberger

  Department for General Psychology and Cognitive Neuroscience, Friedrich Schiller University Jena, Germany

  Contact via: stefan.schweinberger@uni-jena.de