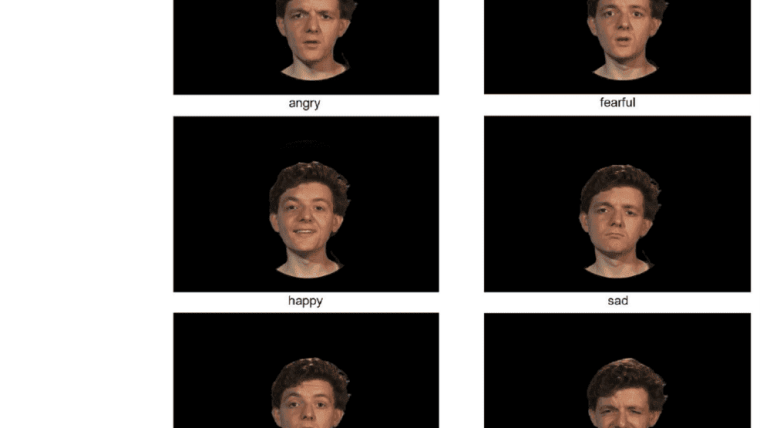

JAVMEPS is an audiovisual (AV) database of emotional voice and dynamic face stimuli, with voices varying in emotional intensity. It includes 2256 stimulus files, organized into three sets:

- (A) Recordings of 12 speakers, each speaking four bisyllabic pseudowords with six naturalistic, induced basic emotions plus neutral, in auditory-only, visual-only, and congruent AV conditions.

- (B) Caricatures (140%), original voices (100%), and anti-caricatures (60%) of happy, fearful, angry, sad, disgusted, and surprised voices, for eight speakers and two pseudowords.

- (C) Precisely time-synchronized congruent and incongruent AV (and corresponding auditory-only) stimuli with two emotions (anger, surprise), in two variants:

- (C1) with original intensity (ten speakers, four pseudowords), and

- (C2) with graded AV congruence (implemented via five voice morph levels, from caricatures to anti-caricatures; eight speakers, two pseudowords).

We collected classification data for Stimulus Set A from 22 normal-hearing listeners and four cochlear implant (CI) users, for two pseudowords, in auditory-only, visual-only, and AV conditions. Normal-hearing listeners showed good classification performance in the AV condition (McorrAV = .59 to .92), and their classification rates in the auditory-only condition were ≥ .38 correct (surprise: .67, anger: .51). Despite compromised vocal emotion perception, CI users performed above the chance level of .14 for auditory-only stimuli, with their best rates for surprise (.31) and anger (.30).
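The chance level of .14 reported above is consistent with seven response categories (six basic emotions plus neutral), since 1/7 ≈ .143. The following is a minimal sketch of that arithmetic, assuming a seven-alternative classification task with uniform guessing; the category labels and the helper name `chance_level` are illustrative and are not taken from the publication.

```python
# Assumption: seven response options (six basic emotions plus neutral),
# which would give the chance level of about .14 quoted in the text.
CATEGORIES = ["happy", "fearful", "angry", "sad", "disgusted", "surprised", "neutral"]


def chance_level(n_categories: int) -> float:
    """Expected proportion correct under uniform random guessing."""
    return 1.0 / n_categories


# Auditory-only classification rates for CI users, as reported in the text.
ci_rates = {"surprised": 0.31, "angry": 0.30}

chance = chance_level(len(CATEGORIES))

# Express each reported rate as a multiple of the chance level.
ratios = {emotion: rate / chance for emotion, rate in ci_rates.items()}

print(f"chance level: {chance:.2f}")
for emotion, ratio in ratios.items():
    print(f"{emotion}: {ratio:.2f} x chance")
```

Under this assumption, both reported CI rates are roughly twice the chance level. The sketch only illustrates the comparison; it is not a significance test.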

We expect JAVMEPS to become a useful open resource for research on auditory emotion perception, especially when adaptive testing or calibration of task difficulty is desirable. With its time-synchronized congruent and incongruent stimuli, JAVMEPS can also help fill a gap in research on the dynamic audiovisual integration of emotional signals, using behavioral or neurophysiological recordings.

For further information, please see the associated publication:

- von Eiff, C. I., Kauk, J., & Schweinberger, S. R. (2024). The Jena Audiovisual Stimuli of Morphed Emotional Pseudospeech (JAVMEPS): A database for emotional auditory-only, visual-only, and congruent and incongruent audiovisual voice and dynamic face stimuli with varying voice intensities. Behavior Research Methods, 1-13.

Terms of Use

All stimulus files of JAVMEPS are freely available to the scientific community via the following link: https://osf.io/r3xqw/

Please cite the following reference when you use JAVMEPS:

- von Eiff, C. I., Kauk, J., & Schweinberger, S. R. (2024). The Jena Audiovisual Stimuli of Morphed Emotional Pseudospeech (JAVMEPS): A database for emotional auditory-only, visual-only, and congruent and incongruent audiovisual voice and dynamic face stimuli with varying voice intensities. Behavior Research Methods, 1-13.

Still-image frame examples from JAVMEPS showing a single speaker displaying different emotions

Screenshot: Celina von Eiff

- Julian Kauk, Department for General Psychology and Cognitive Neuroscience, FSU Jena. Contact via: julian.kauk@uni-jena.de

- Stefan R. Schweinberger, Department for General Psychology and Cognitive Neuroscience, FSU Jena. Contact via: stefan.schweinberger@uni-jena.de